Are SLMs reliable? LLMs vs. SLMs: everything you need to know

LLMs vs. SLMs

By Sofía Sánchez González

When we talk about generative artificial intelligence, we are not only talking about one type of model, one type of hosting, or one form of implementation. There are many options to consider before choosing a model, and depending on what you select, the process and the experience will be different. The same applies to Large Language Models (LLMs) vs. Small Language Models (SLMs), which is why in this post we explain everything you need to know.

A few weeks ago, we talked about private LLM vs. public LLM hosting. Both options have their pros and cons when it comes to cost, control, scaling, and data privacy. That is why it is important to evaluate the differences before making a choice. So let’s start from the beginning.

What is a Small Language Model?

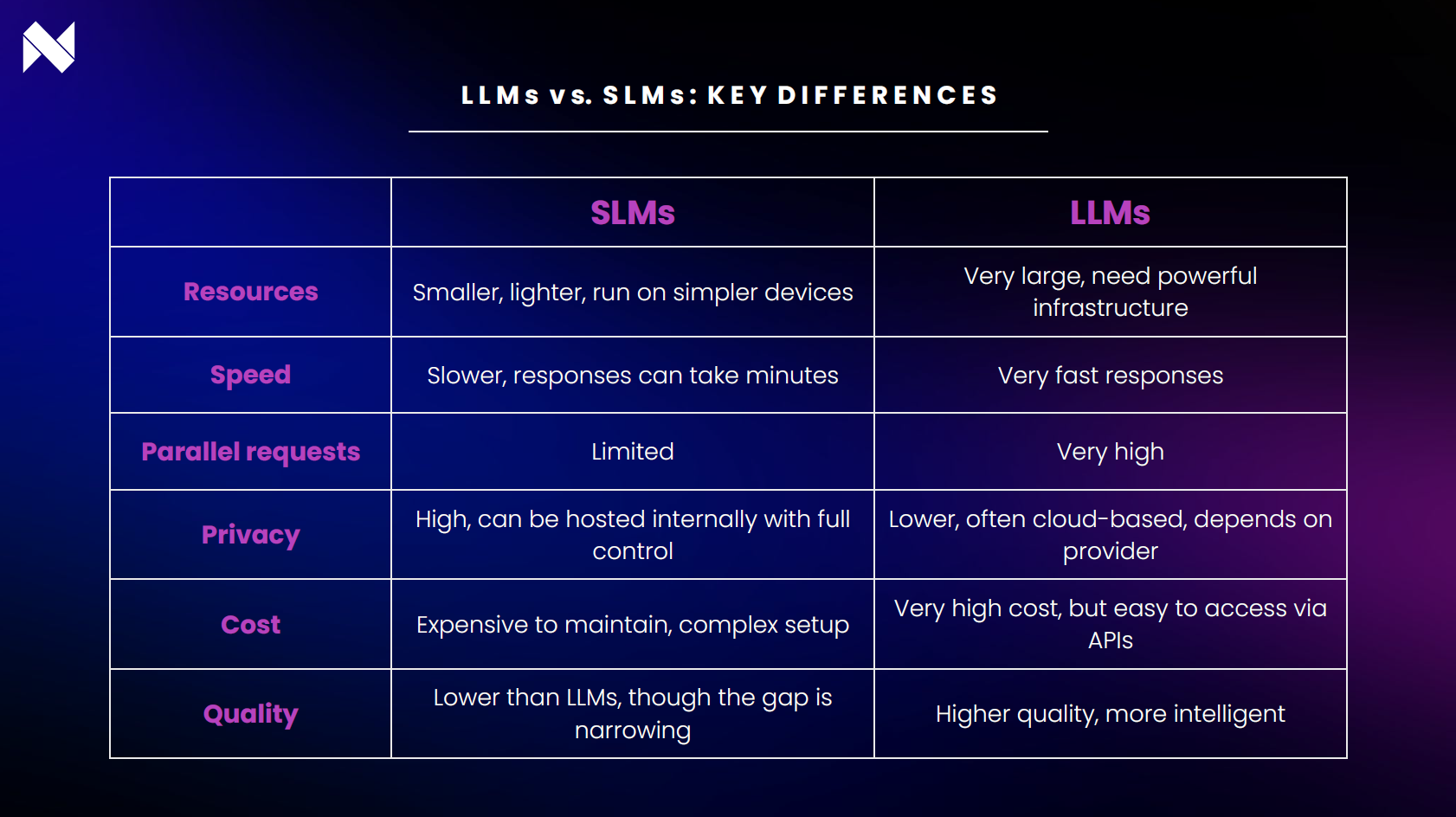

We often hear about LLMs, but not so much about their smaller counterparts. A Small Language Model (SLM) is, by definition, a smaller and lighter language model than an LLM. It consumes fewer resources, can run on simpler devices, and provides faster and more efficient responses for specific tasks, although with less reach than larger models.

LLM vs SLM: key differences explained

Privacy and security in LLMs vs SLMs

SLMs are commonly deployed for clients, corporations, or projects that do not wish to use LLMs for data security reasons. The pharmaceutical industry, for example, handles extremely sensitive data, such as patients’ personal and medical information, clinical trial results, confidential research, and intellectual property. This data must be kept private, and its handling is very often regulated by law.

Large language models are also expensive to maintain, which is why most organizations use shared, provider-hosted models rather than running their own. Some providers, such as Amazon, claim that they will not use your data for retraining and that your information is safe.

LLM vs SLM use cases: which one should you choose?

A hosted LLM is the recommended option when ease and convenience matter most. SLMs, however, have several advantages:

- We can host it ourselves.

- It stays within our network.

- We keep full control over it.

But there are also disadvantages:

- The cost of maintaining an SLM is high.

- They are usually slower and take longer to generate results. Hosted LLMs are fast, but many self-hosted SLMs can take around 3 minutes to generate a response (approximately 30 tokens per word). On the smallest possible machine, performance is slow; faster hardware exists, but costs quickly skyrocket.

- The model isn’t as “smart”, although the gap has narrowed significantly in recent times.

- The quality of the output is a little lower than with an LLM.

- Maintaining the machine is very complicated: you need to install GPU drivers, update them, and keep up with patches, all of which takes time and skilled staff.

Are SLMs better for small tasks? Myth or reality?

Generative models that respond to a prompt are usually LLMs. Very small models (tiny models) can be retrained for very specific tasks, but with a good prompt, a general model can also give you good results. For organizations that consume a model as a service through an API on platforms like AWS, security and scalability guarantees are generally provided by the platform.

The middle ground: medium language models

Think it’s only about SLMs and LLMs? Well, medium language models (MLMs) also exist. Thanks to a technique called quantization, these models can run on more limited systems. The idea is simple: GPU memory holds the model’s millions or billions of parameters, which act like spigots adjusting the flow of information. Normally, each parameter is stored as a high-precision number with many decimal places (typically 16 or 32 bits). With quantization, that precision is reduced (for example, to 8 or 4 bits) and the values are rescaled to fit the smaller range.
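To make the idea more concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The function names, the sample weights, and the use of a single shared scale are assumptions made for illustration; production toolchains use more elaborate schemes, so treat this as a toy model of the technique rather than how any particular model is quantized.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero input, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.8123, -0.0457, 0.3301, -1.2709]

quantized, scale = quantize_int8(weights)
restored = dequantize_int8(quantized, scale)

# The rounding step loses at most half a quantization step per value.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", quantized)
print("restored: ", restored)
print("max error:", max_error)
```

Each weight now fits in a single byte plus one shared scale, at the price of a small rounding error per value.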

The result is that a very large model, such as one with 80 billion parameters, needs far less memory and runs more efficiently when quantized. That is why it is often more useful to work with large quantized models than with small unquantized models. In fact, some medium models, like DeepSeek or Qwen3, have gained attention thanks to their high-quality responses, even when heavily quantized.
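A back-of-the-envelope calculation shows why this matters for hardware. The sketch below estimates the memory needed for the weights alone of an 80-billion-parameter model at different precisions. The bit widths are assumed examples, and the estimate deliberately ignores everything else a running model needs (activations, caches, and the small overhead of storing quantization scales), so real requirements will be higher.

```python
def weight_memory_gb(num_parameters, bits_per_parameter):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bits_per_parameter / 8 / 1e9


params = 80_000_000_000  # the 80-billion-parameter example from the text

for bits in (16, 8, 4):  # assumed precisions, for illustration only
    print(f"{bits}-bit weights: {weight_memory_gb(params, bits):.0f} GB")
```

Under these assumptions, going from 16-bit to 4-bit weights cuts the footprint from roughly 160 GB to roughly 40 GB, which can be the difference between needing several GPUs and fitting on one.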

What should you choose?

Choosing the right model really depends on your use case. At Narrativa, we don’t just talk about flexibility—we deliver it. Whether you need the efficiency of an SLM, the power of an LLM, or a seamless blend of both, our platform and team can adapt to your needs. Book a demo today and discover how our AI solutions can accelerate your workflows, cut costs, and keep you fully compliant.

Learn more about AI models.

About Narrativa

Narrativa® Agentic AI solutions unlock a faster, smarter future for life sciences organizations, helping them to efficiently produce complex, high-volume documentation for regulatory and commercialization workflows. By automating content creation, Narrativa® delivers greater speed, accuracy, and consistency—while ensuring full compliance in highly regulated environments.

The Narrativa® Navigator platform provides secure and specialized Agentic AI-powered automation features. It includes complementary user-friendly tools such as Clinical Atlas for CSR and Protocol generation, Narrative Pathway, TLF Voyager, and Redaction Scout, which operate cohesively to transform clinical data into submission-ready documents for regulatory and commercialization. From database to delivery, pharmaceutical sponsors, biotech firms, and contract research organizations (CROs) rely on Narrativa® to streamline workflows, decrease costs, and reduce time-to-market across the clinical lifecycle and, more broadly, throughout their entire businesses.

Explore www.narrativa.com and follow on LinkedIn, Facebook, Instagram, and X.